Relation Extraction (RE) aims to detect semantic relationships between entity pairs in natural language text and is crucial to various natural language processing (NLP) applications, including question answering and knowledge-base (KB) population.

Typical RE methods follow a supervised approach and therefore require a large amount of labeled training data, which is rarely available. For that reason, recent studies focus on distantly supervised (DS) approaches that automatically generate large amounts of training data by aligning facts in a KB with sentences mentioning these facts. Even though DS effectively scales RE to larger corpora, it suffers from noisy labels, and existing approaches mainly recognize only the most frequent relations.
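The alignment step behind distant supervision can be illustrated with a minimal sketch. The toy facts, sentences, and the `distant_label` helper below are hypothetical and use plain substring matching; real pipelines rely on entity linking, and this is not the data pipeline used in the paper. The sketch also shows where the noise comes from: any sentence mentioning both entities receives the relation label, whether or not it expresses that relation.

```python
from collections import defaultdict

# Toy knowledge base of (head, relation, tail) facts; illustrative only.
KB_FACTS = [
    ("Barack Obama", "place_of_birth", "Honolulu"),
    ("Apple", "founded_by", "Steve Jobs"),
]

# Toy corpus; the second and third sentences mention an entity pair
# without expressing the KB relation, so they become noisy labels.
SENTENCES = [
    "Barack Obama was born in Honolulu, Hawaii.",
    "Barack Obama visited Honolulu last week.",
    "Steve Jobs unveiled the first iPhone for Apple in 2007.",
    "Honolulu is the capital of Hawaii.",
]


def distant_label(facts, sentences):
    """Group sentences into bags, one bag per KB fact.

    A sentence joins a fact's bag when it mentions both the head and the
    tail entity (naive substring match, an assumption of this sketch).
    """
    bags = defaultdict(list)
    for head, relation, tail in facts:
        for sentence in sentences:
            if head in sentence and tail in sentence:
                bags[(head, relation, tail)].append(sentence)
    return dict(bags)


bags = distant_label(KB_FACTS, SENTENCES)
for fact, bag in bags.items():
    print(fact, len(bag))
```

Each bag here inherits the relation of its fact, which is why a noise-reduction mechanism over the sentences in a bag is needed.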

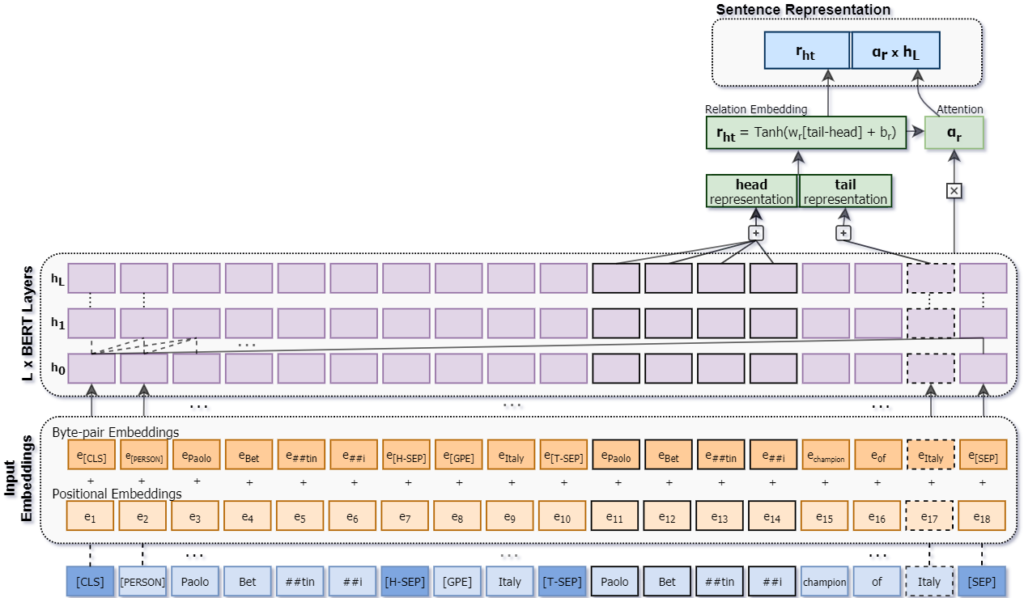

Our paper “Improving Distantly-Supervised Relation Extraction Through BERT-Based Label and Instance Embeddings”, which was recently published in IEEE Access, provides a novel distantly supervised RE method that efficiently suppresses noise and captures a broader set of relations. We achieve this by guiding our model, REDSandT (Relation Extraction with Distant Supervision and Transformers), to focus solely on relational tokens by fine-tuning BERT on a structured input that includes the sub-tree connecting an entity pair and the entities’ types. Using the extracted informative vectors of the entity pair, we shape label embeddings, which we also use as an attention mechanism over instances to further reduce noise. Finally, we represent sentences by concatenating relation and instance embeddings. Experiments on two benchmark English datasets show state-of-the-art results. The following figure shows the sentence representation in REDSandT. Our code, trained models and data are publicly available.

Sentence Representation in REDSandT
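To make the idea of concatenated sentence vectors and attention over instances more concrete, here is a rough sketch in plain Python. It is not the REDSandT implementation: the helper names, the toy vectors, and the dot-product scoring of each instance's relation embedding against a candidate label vector are all assumptions chosen for illustration. The published code should be consulted for the actual formulation.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sentence_vector(relation_emb, instance_emb):
    """Represent a sentence as the concatenation of its two embeddings."""
    return relation_emb + instance_emb  # list concatenation


def bag_vector(instances, label_emb):
    """Pool a bag of instances into a single vector.

    Each instance is a (relation_emb, instance_emb) pair. Instances whose
    relation embedding agrees with the label vector get a higher weight
    (dot-product scoring is an assumption of this sketch).
    """
    weights = softmax([dot(rel, label_emb) for rel, _ in instances])
    vectors = [sentence_vector(rel, inst) for rel, inst in instances]
    dim = len(vectors[0])
    pooled = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
    return pooled, weights


# Hypothetical 2-dimensional embeddings for a bag of two sentences.
instances = [
    ([1.0, 0.0], [0.5, 0.5]),  # relation embedding aligned with the label
    ([0.0, 1.0], [0.2, 0.8]),  # likely a noisy instance
]
label_emb = [2.0, 0.0]

pooled, weights = bag_vector(instances, label_emb)
print(weights)
print(pooled)
```

The first instance receives the larger weight, so a noisy sentence contributes less to the pooled bag representation.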

Future work includes extending this method within the ECARLE project to extract relationships from Greek corpora, which exhibit the same limitations addressed in this work: the corpus is constructed through distant supervision by aligning given relational facts to Greek texts, and the relations are highly imbalanced.